Will Machine Learning and Artificial Intelligence diverge into separate disciplines?

In 20 years, ML will be to AI what biology is to medicine, economics is to finance, and hardware is to software

Want to try out Slingshot AI for your next ML project? We have collaborative tools for end-to-end ML development. Sign up at slingshot.xyz or email me at daniel@slingshot.xyz. I’d love to deep-dive with you and hear about your latest ML projects!

I got into a debate this week with the founder of a Series B ML company. He argues that machine learning is still a strict subset of AI. I say that while that's historically true of the two disciplines, machine learning now powers AI, and over time, the two will live on as tightly connected fields - like biology and medicine, economics and finance, and hardware and software.

I’ll make my case, and you tell me what you think.

Artificial Intelligence and Machine Learning

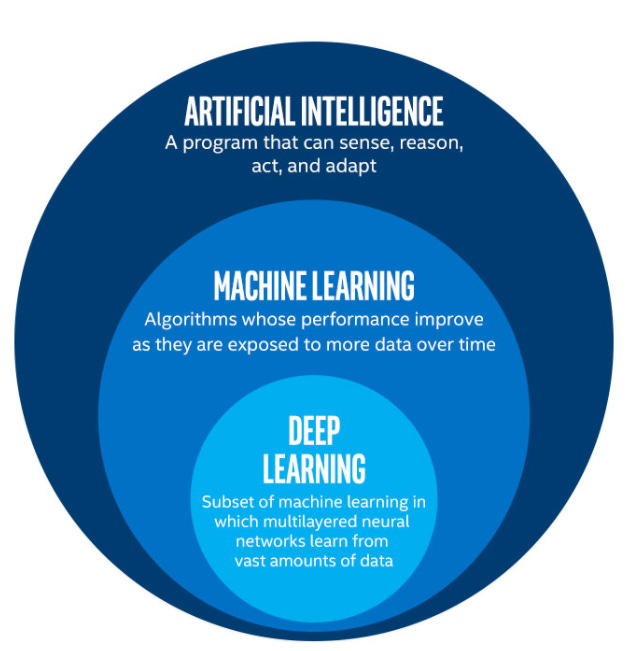

Virtually every Intro to ML lecture will start with a slide like the one above, explaining the relationship between AI, ML, and Deep Learning.

AI as a parent field can be understood narrowly or broadly. In the broad conception, AI refers to a wide range of “problem-solving” algorithms and machines. Here, algorithms that route internet traffic may be considered intelligent, and machine learning is just a particular category of intelligent algorithms. But are intelligent algorithms AI? And would anyone really call routing algorithms intelligent?

On the other hand, in the narrow understanding, AI has historically been an objective. Machine learning was predominantly founded as a subfield aiming to achieve AI. It remained a subfield because there were other potential routes, such as the logic-inspired AI paradigms that treat reasoning, inference, and knowledge as central primitives for producing AI.

Implicitly, the latter is what most developers believe. Machine learning serves strictly to unlock AI, and once we’re there, machine learning will essentially be obsolete.

Machine Learning powers AI

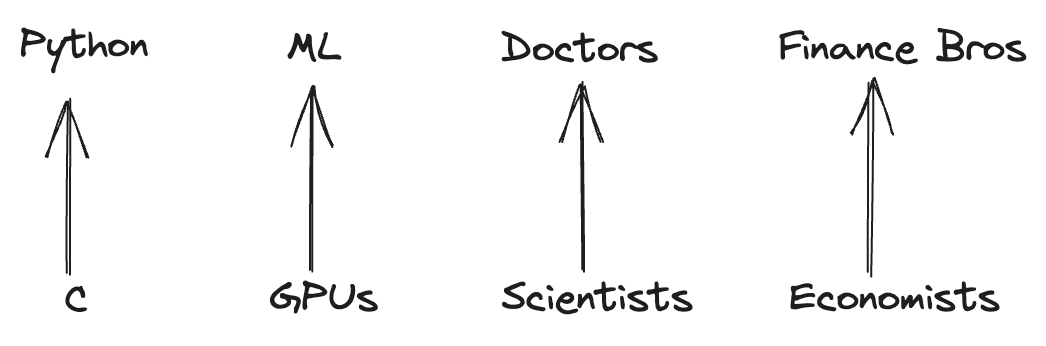

Most Python developers run their code on the CPython virtual machine. Most Python developers also don’t know C. And yet, they benefit from every change that C developers make to improve the CPython runtime.

ML developers run their code on GPUs. Most ML developers are not GPU developers and are thankful for how little they need to think about the CUDA runtime. They’re also grateful for every GPU advancement that unlocks their next development frontier.

Doctors prescribe drugs, but few of them do the research that brings about new ones.

In the early days, game developers probably thought they were the gamers. Many game developers are gamers, but the vast majority of gamers are not game developers.

Machine learning is the dominant (read: only) approach to producing AI artifacts. It’s analogous to how all lawyers go to law school. That’s how you become a lawyer (except on Suits). But when you hire a lawyer, you couldn’t care less about their law school experience - just how well they can do their jobs.

Similarly, harnessing AI does not require a complete understanding of how the model artifacts being used were created. Though such an understanding is likely very helpful, it’s far from obvious that, for example, the best users of ChatGPT are actually machine learning engineers.

Machine Learning isn’t going anywhere

Scientists at DeepMind trained AlphaFold to predict how protein sequences would fold. Is that AI, in the same way as a model that could engage deeply in the logic of magic in the Harry Potter universe? Probably not, but it’s a whole lot more useful.

AGI will use machine learning. Even post-AGI, we’ll see machine learning models increasingly used for tasks like fraud detection. The models will continue to learn patterns from vast amounts of data and perform far better than “AGI-style” algorithms like GPT. The models might be trained, deployed, and operated by AGI, even exclusively for its own consumption (ideally, all done on Slingshot).

Outside of neural networks, the most popular machine learning models are tree-based. A decision tree is an algorithm with a structure that’s learned rather than programmed - but it’s hardly AI. Play with definitions as you will, but fraud detection models could seldom be called “intelligent.” They can't perform cognitive tasks - but they take in features from some high-dimensional feature space and perform a small number of narrow, valuable tasks with them.
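To make “learned rather than programmed” concrete, here is a minimal sketch of the smallest possible decision tree: a single split, often called a stump. The transactions, the 1000.0 hand-picked threshold, and the function names are all made up for illustration. Real fraud models use many features and much deeper trees.

```python
# Toy, made-up transactions: (amount_usd, is_fraud). Purely illustrative data.
TRANSACTIONS = [
    (12.0, False),
    (25.0, False),
    (40.0, False),
    (55.0, False),
    (900.0, True),
    (1200.0, True),
    (1500.0, True),
]


def programmed_rule(amount):
    """A hand-written rule: a developer guessed the threshold."""
    return amount > 1000.0


def learn_stump(rows):
    """Learn a one-split decision tree (a "stump") from labeled rows.

    Tries a threshold midway between each pair of adjacent sorted amounts
    and keeps the one that misclassifies the fewest rows.
    """
    amounts = sorted(amount for amount, _ in rows)
    candidates = [(a + b) / 2 for a, b in zip(amounts, amounts[1:])]

    def errors(threshold):
        # Count rows where "amount > threshold" disagrees with the label.
        return sum((amount > threshold) != is_fraud for amount, is_fraud in rows)

    return min(candidates, key=errors)


# The structure (here, just one threshold) comes from the data.
threshold = learn_stump(TRANSACTIONS)


def learned_rule(amount):
    """Same shape as the programmed rule, but with a learned threshold."""
    return amount > threshold


print(threshold)
print(programmed_rule(900.0), learned_rule(900.0))
```

Both rules have the same shape. The only difference is where the threshold came from, and the hand-picked one misses the 900.0 transaction that the learned one catches. That is useful, but nobody would call it intelligent.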

There will also be machine learning engineers who build narrow AI models, such as specialized LLMs and large image models, for specific high-value use cases where general-purpose models underperform - for example, AI agents for mental health support.

And, of course, there will be many machine learning developers who focus exclusively on machine learning for general-purpose AI.

So what is AI, then?

Defining AI is tricky primarily because artificial intelligence has been a moving target, definitionally speaking. The phrase “AI Effect” has come to refer to how experts have historically redefined AI constantly with each new technological advancement to exclude whatever recently became achievable by computers. First, you’d say that if your computer could play chess - surely it would be AI! But the day it beats the grandmasters, we look back and say - nope, it’s just doing a bunch of computations.

In 2021, Yoshua Bengio, Yann LeCun, and Geoffrey Hinton - commonly known as the godfathers of AI - co-wrote their Turing Lecture, “Deep Learning for AI.” The title shows their understanding of deep learning as a tool for or a path toward artificial intelligence. And yet, it’s worth noting that in the paper, they don’t bother to define AI - presumably because doing so would be pretty futile and not very useful.[1]

So why does any of this matter?

This debate may sound esoteric, but it represents some fundamental questions about the future of AI.

In the last year, AI has quickly become part of everyday vocabulary, where it refers to existing, widely available technologies. ChatGPT and Midjourney are frequently considered AI tools, except by the most pedantic experts who reserve “intelligence” for their preferred definition.

AI is a meaningful term in that it refers to some things and not others. GitHub Copilot is AI, but elevator algorithms in skyscrapers just aren’t (yet).

And it matters because if machine learning and AI do diverge, it’s likely that ML developers won’t be the same people as AI developers. Weights & Biases, Hugging Face, and Slingshot will increasingly form a machine learning tech stack. They will be used to support AI, and AI applications will be built on top.[2]

[1] Debating the definition of artificial intelligence is indeed a prescriptivist heaven. Prescriptivists are the folks who like to define language strictly and then tell you you're misusing a word because you're not following their favorite definition of it. When I say, “I’m literally freezing to death,” the prescriptivist corrects me: “You mean figuratively, not literally.” A descriptivist (most of us) would be on safe ground to respond, “Literally literally means figuratively. [So shut up.]”

[2] Many of the newest .ai companies already do little or no machine learning (which is perhaps premature, or a mistake).